A 3D camera for safer autonomy and advanced biomedical imaging

Researchers demonstrated the use of stacked, transparent graphene photodetectors combined with image processing algorithms to produce 3D images and perform range detection.

Researchers at the University of Michigan have proven the viability of a 3D camera that can provide high-quality three-dimensional imaging while determining how far objects are from the lens. This information is critical for 3D biological imaging, robotics, and autonomous driving.

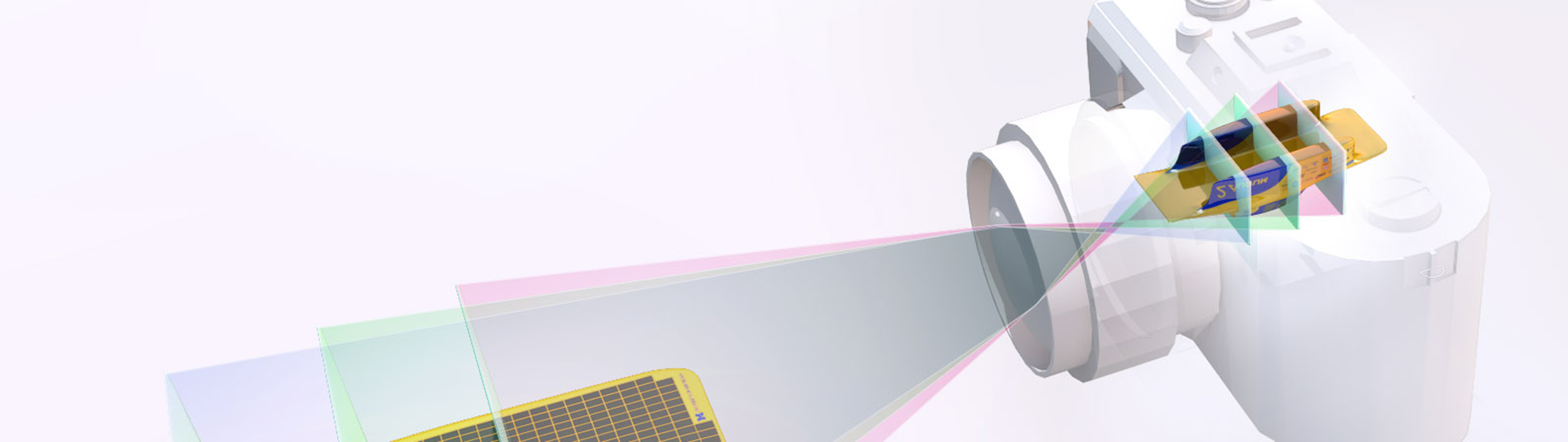

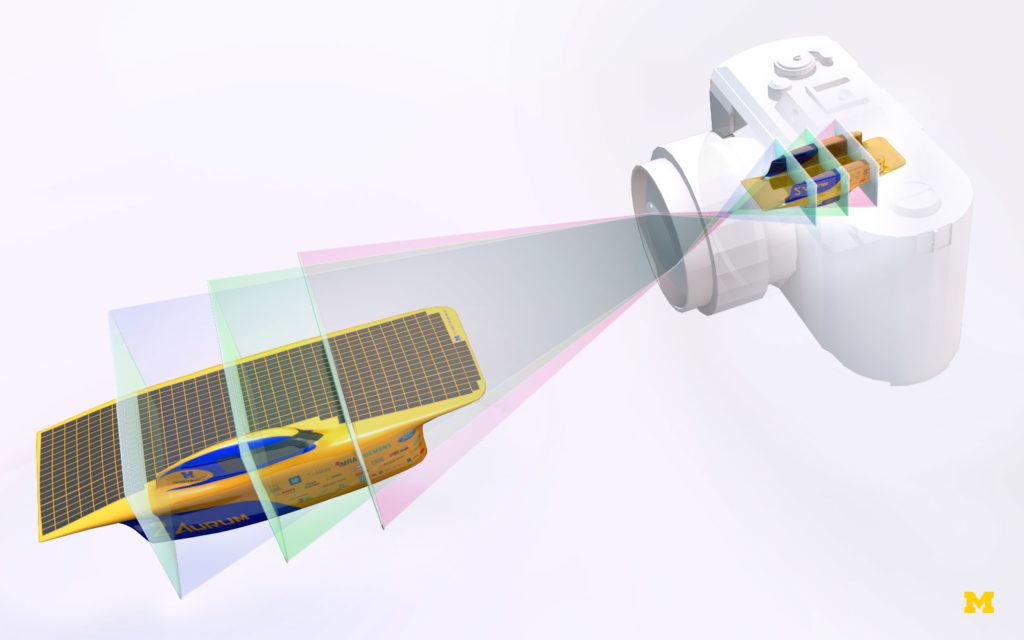

Instead of the opaque photodetectors traditionally used in cameras, the proposed camera uses a stack of transparent photodetectors made from graphene to simultaneously capture and focus on objects at different distances from the camera lens.

The system works because of the unique traits of graphene, which is only one atomic layer thick and absorbs only about 2.3% of the light that strikes it. A pair of graphene layers can be used to construct a photodetector that detects light efficiently even though it absorbs less than 5% of the light. When placed on a transparent substrate rather than, for example, a silicon chip, the detectors can be stacked, with each one in a different focal plane.
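The arithmetic behind those figures can be checked with a short sketch. It uses only the 2.3% per-layer absorption quoted above and assumes, for simplicity, that the substrate and electrodes absorb and reflect nothing, which a real device would not achieve. The function names are illustrative and do not come from the researchers' work.

```python
# Per-layer absorption of graphene, as quoted in the article.
GRAPHENE_LAYER_ABSORPTION = 0.023


def detector_absorption(layers_per_detector=2,
                        layer_absorption=GRAPHENE_LAYER_ABSORPTION):
    """Fraction of incident light absorbed by one detector made of N graphene layers."""
    return 1.0 - (1.0 - layer_absorption) ** layers_per_detector


def light_reaching_each_detector(num_detectors, layers_per_detector=2):
    """Fraction of the original light arriving at each detector in the stack.

    Assumption: losses come only from the graphene layers themselves.
    """
    transmitted = 1.0 - detector_absorption(layers_per_detector)
    return [transmitted ** i for i in range(num_detectors)]


if __name__ == "__main__":
    print(f"Two-layer detector absorbs {detector_absorption():.2%} of the light")
    for index, fraction in enumerate(light_reaching_each_detector(4), start=1):
        print(f"Detector {index} receives {fraction:.1%} of the incoming light")
```

Under these idealized assumptions, a two-layer detector absorbs roughly 4.5% of the light, so most of the light passes on to the detectors behind it in the stack.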

As described by Prof. Ted Norris:

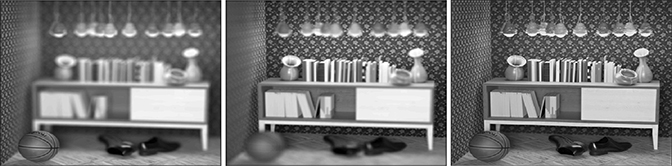

“When you have a camera, you have to have a focusing adjustment on your lens so that when you’re focusing on a particular object like a person’s face, the rays of light that are coming from that person’s face are focused onto that single plane on your detector chip. Items in front or behind the object are out of focus.

But if it were possible to stack different detector arrays each in different focal planes, then they could each image accurately a different place in the object space simultaneously. What’s more, if you can detect multiple focal planes of data all at the same time, you can use algorithms to reconstruct the object in three dimensions. That is called a light field image.

We have demonstrated how to use transparent focal stacks to do light field imaging and image reconstruction.”

In addition to basic object identification, the current paper shows how the researchers' device can detect how far away something is, making it suitable for applications in autonomous driving and robotics. It is also ideal for biological imaging in cases where it is important to image a three-dimensional volume.
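One way to see why a stack of focal planes carries distance information is the textbook thin-lens equation, 1/f = 1/d_o + 1/d_i: each sensor plane behind the lens is in sharp focus for exactly one object distance. The sketch below is a simplified depth-from-focus illustration under that idealized model, not the reconstruction algorithm from the paper, which this article does not describe. The focal length, sensor positions, and sharpness scores are hypothetical values chosen for the example.

```python
def image_distance(focal_length, object_distance):
    """Thin-lens equation solved for the image distance d_i."""
    if object_distance <= focal_length:
        raise ValueError("object must lie beyond the focal length to form a real image")
    return 1.0 / (1.0 / focal_length - 1.0 / object_distance)


def in_focus_object_distance(focal_length, sensor_distance):
    """Object distance that is sharply focused on a sensor at sensor_distance."""
    if sensor_distance <= focal_length:
        raise ValueError("sensor must sit beyond the focal length")
    return 1.0 / (1.0 / focal_length - 1.0 / sensor_distance)


def estimate_range(focal_length, sensor_distances, sharpness_scores):
    """Crude range estimate: take the sharpest sensor plane and report the
    object distance that plane is focused on.

    sharpness_scores is a hypothetical per-plane focus measure (higher = sharper).
    """
    sharpest = max(range(len(sensor_distances)), key=lambda i: sharpness_scores[i])
    return in_focus_object_distance(focal_length, sensor_distances[sharpest])


if __name__ == "__main__":
    f_mm = 50.0  # hypothetical lens focal length in millimetres
    # Two hypothetical sensor planes, focused at 2 m and 0.5 m respectively.
    planes = [image_distance(f_mm, 2000.0), image_distance(f_mm, 500.0)]
    # Hypothetical focus measures: the second plane sees the sharper image.
    print(estimate_range(f_mm, planes, [0.2, 0.9]))
```

With only two planes this picks between two candidate distances; a denser stack, or interpolating between the sharpness values of neighbouring planes, would give a finer estimate.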

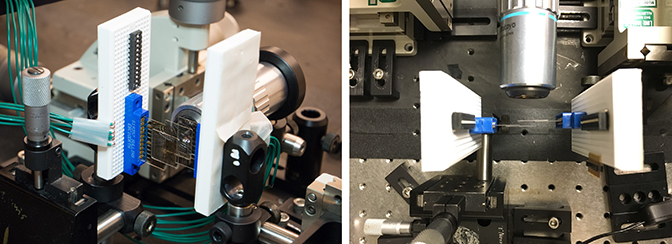

For its ultimate success, the project required complementary expertise in three areas. Prof. Zhaohui Zhong’s team developed the graphene devices; Norris’ group worked on the design features of the optical instrument and demonstrated the devices in the lab; and Prof. Jeff Fessler’s group developed the image reconstruction algorithm.

Fessler echoed the other faculty in stating the group of nine researchers consisting of faculty, postdocs and students “coalesced as a great team, all learning from each other and contributing different aspects of the final paper.”

Inspiration for the camera came from previous research by Zhong and Norris on highly sensitive graphene photodetectors, published in Nature Nanotechnology in 2014.

The transparent graphene sensors fabricated so far are too low-resolution to depict images, but the initial experiments showed that the lens focused light from a different distance onto each of the two sensors.

Work is continuing on the project.

The paper, “Ranging and Light Field Imaging with Transparent Photodetectors,” by Miao-Bin Lien, Che-Hung Liu, Il Yong Chun, Saiprasad Ravishankar, Hung Nien, Minmin Zhou, Jeffrey A. Fessler, Zhaohui Zhong, and Theodore B. Norris, was published in Nature Photonics.

The initial funding for development of the photodetector came from the National Science Foundation. Funding for development of the transparent detector, image reconstruction, and ranging demonstration came from the W.M. Keck Foundation, which focuses on emerging research with the potential for transformative impact. Devices were fabricated in the Lurie Nanofabrication Facility at the University of Michigan.

See also: A better 3D camera with clear, graphene light detectors

Catharine June

ECE Communications and Marketing Manager