In our image

How and why we make machines that move like us.

Jonathan Hurst likes to go running at night. It’s harder to see the ground then – harder to make out the roots, gullies and sidewalk lips and bound over them without tripping.

“I do it to understand the robots better,” he says.

Hurst is assistant professor of mechanical engineering at Oregon State University. He designs machines that walk and run on two legs, and do it without vision sensors. Going for 9 p.m. jogs helps him make sense of how his own body can navigate rough and rolling paths without even seeing them.

“Once, we were playing capture the flag in a field,” he recalls. “It was getting dark. At some point, I realized I had just run over a ditch, and I was shocked to still be running.

“Humans can run blindfolded. We have this inherent stability that keeps the whole thing going. I think people assume it’s simple because it’s common, but science hasn’t unraveled the puzzle.”

Two-legged locomotion is one of robotics’ next frontiers. For thousands of years, humans have been sketching machines shaped in our image. For centuries, we’ve been building them. But only in the past few decades have we developed a capacity to make them move like we do.

The field is in high gear right now. With the DARPA Robotics Challenge, the Defense Advanced Research Projects Agency has called upon the research community to speed up development of rescue ’bots that could help in disasters. And on the industry side, Google in 2013 bought up eight leading robotics companies. It now owns the firms that built the top finishers in the Robotics Challenge semifinals two Decembers ago. In the finals this summer, DARPA expects around 20 teams to compete for a $2 million grand prize.

Most of the entries are humanoids, even though humans’ primary mode of transportation is one of the hardest to get right. What makes it so difficult? How are researchers doing it? And why, when bipeds are among nature’s rarest and most precarious designs, are a host of engineers set on mimicking them?

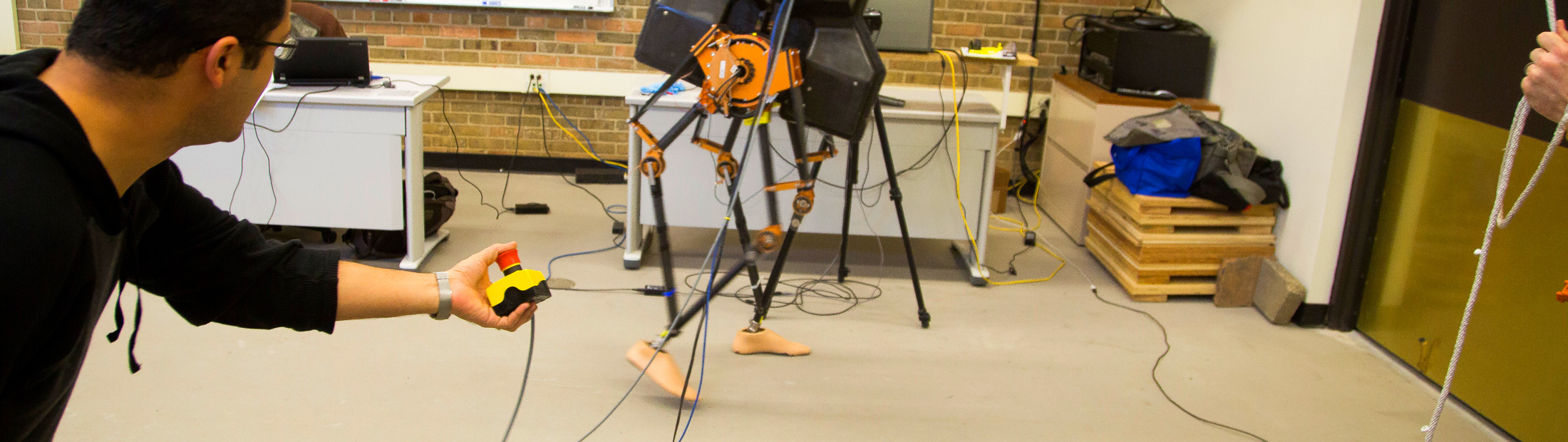

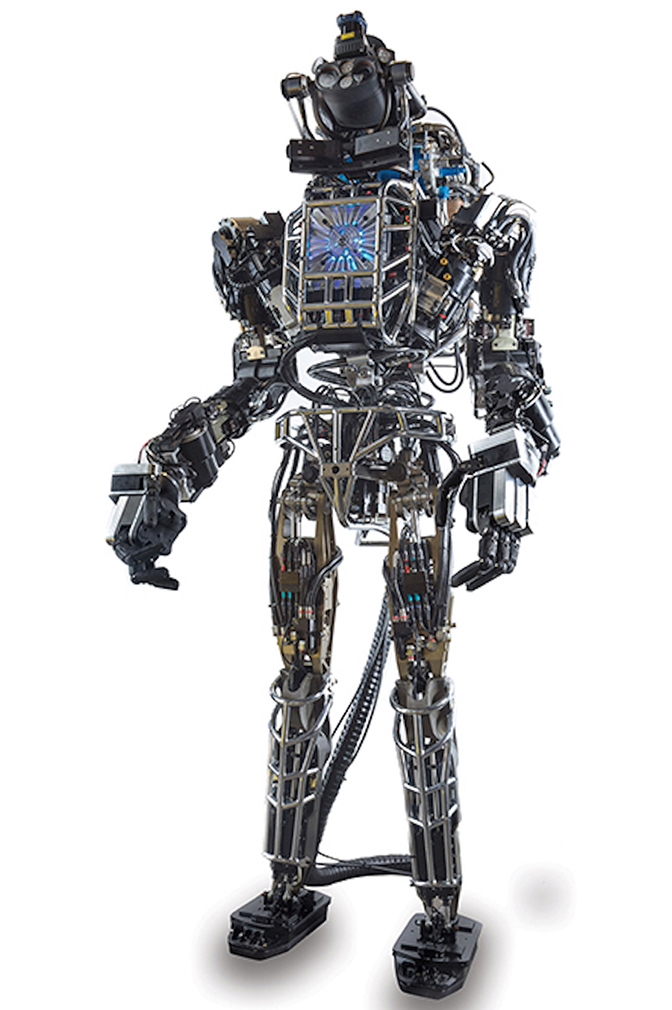

One of the world’s most advanced person-shaped robots stands at the foot of four cinderblock stairs. This iteration of “Atlas” is 6 feet 2 inches and 330 pounds, with laser eyes, hydraulic line muscles and lean legs wrapped in titanium cages. It’s named for the Greek Titan who holds up the sky.

In what looks like slow motion, the robot shifts its weight and leans to the right. It bends its left leg, lifts and sets it down on the first step. Then it hoists the rest of its frame up and stops.

“Now Atlas is going to contemplate life for a while and determine its next move,” says Jessy Grizzle, the Elmer G. Gilbert Distinguished University Professor of Engineering and the Jerry W. and Carol L. Levin Professor of Engineering in electrical and computer engineering and mechanical engineering at the University of Michigan.

Grizzle worked with Oregon State’s Hurst to make the world’s fastest two-legged robot with knees. In Grizzle’s lab, he’s playing videos of other people’s robots.

It takes Atlas more than seven minutes to ascend four stairs. That’d be a long time in a burning building. As he watches the robot, Grizzle is underwhelmed and impressed at the same time.

“This is a fantastic machine!” he says. “If it were mine, I’d be running around the block telling everyone how proud I am.”

Atlas was built by Boston Dynamics, one of Google’s acquisitions. At least six copies, each programmed by a different team, are competing in the DARPA Challenge finals. The one in the video won second place in the semifinals.

“I would show you the winner,” Grizzle says, “but all its videos have been sped up by 30 times.”

To be fair, the DARPA Challenge isn’t just about speed, at least not at the level of the individual events in what’s been called the robot Olympics. In the semifinal course inspired by the Fukushima nuclear disaster site, mechanical competitors had to drive a car, step over obstacles, move debris, open a door, climb a ladder, break through a wall with a power tool, turn valves, and connect a fire hose to a hydrant. While they were allowed half an hour for each stage in the initial round, in the finals, they’ll have just one hour to do the whole course. That’s an average of 7.5 minutes per stage.

Where speed matters more is in how fast engineers could get their machines up to the tasks. When DARPA announced the contest in 2012, no known robot could do all this with minimal supervision in unpredictable situations. The agency expects technological leaps over a few short years. That’s what its Grand Challenge in 2007 did for self-driving cars.

But disaster-response robots have a long way to go. Even at this Atlas model’s deliberative pace, its team was nervous as it climbed the stairs in 2013.

“Walking is our bread and butter, so we really wanted to do that well,” said Doug Stephen, research associate with the Florida Institute for Human Machine Cognition.

“We got a perfect score, but the whole time we were on the edge of our seats.”

Researchers at the institute have been building bipeds for decades. The approach makes sense, Stephen says, if you want robots that can operate in a world built for people.

“We have all these places designed for two legs,” Stephen says. “Bipeds are definitely harder, but a robot that looks like a human is going to be about as versatile as a human once we get the technology where it needs to be.

“But you have to make the assumption that there’s something that’s right about the human form.”

Shelves of skulls line Milford Wolpoff’s lab walls. They’re casts from our ancestors. The drawers and bins hold primate pelvises, australopithecine teeth and human leg bones.

Wolpoff is a paleoanthropology professor at U-M. He questions the engineers’ approach, and does so with some glee.

“Why design a human?” Wolpoff asks. “We’re not the fastest runners. We’re not the most stable. We’re not anything special in our locomotion. Natural selection works with what’s already there and we have ancestors who were not human.”

Our forebears lived in the trees like modern chimpanzees. They walked on branches and hung from them. The first hominid to mainly walk upright on the ground – a trait that separates us from the apes – lived about four million years ago.

Human bodies changed over the eons to accommodate this new way of traveling. Our feet arched. Our ankles and knees strengthened. Our leg bones canted in. Our pelvises pivoted. Our spines curved.

This evolution also left scars, Wolpoff says.

“How many people do you know that have bad backs?”

He’s one of them. In Wolpoff’s opinion, humans don’t have enough vertebrae to support the curves in our spines. We could use about five more. He knows robots don’t need vertebrae. He’s making a point – that people aren’t necessarily good models.

If rescue robots need to carry things, why not give them four legs and two arms instead of making them balance upright on two legs? Even three legs would seem more stable than two. A tail could also work. That’s how the theropod dinosaurs kept their balance. Don’t forget, he says, they were bipeds too.

Do these robots need legs at all? Engineering researchers say yes because legs are more nimble than wheels or tracks – capable of stepping over rubble and up stairs.

Many researchers are looking to other animals for inspiration. Two of the DARPA Challenge contestants are modeled more after chimps than people, for example. And there’s another contingent that’s using a clean slate. To Wolpoff, that seems like the best approach.

“If you start from scratch,” he says, “you could make a much better self. These engineers are freer than they think they are. I think they’re far more constrained than they need to be.”

Maybe it can’t be helped, in a sense. Sara Kiesler, a professor of computer science and human-computer interaction at Carnegie Mellon University, points to drawings of people on cave walls and to primitive dolls.

“This is something that’s ancient,” Kiesler says. “Humans have an impulse to create art, objects, sculptures and artifacts that look like the things they’re most interested in – themselves.”

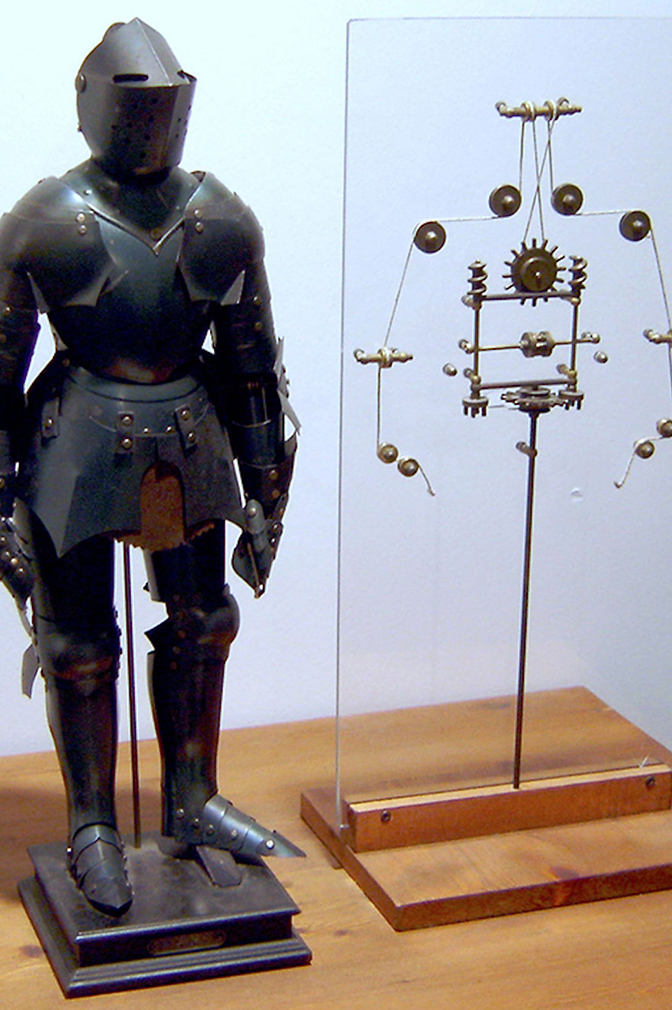

In the 15th century, Leonardo da Vinci designed the first humanoid robot in Western civilization. It was a typical Italian suit of armor outside, but inside was a network of wood, leather, brass and bronze that, when operated by cables, could sit up, wave its arms and move its head. So writes Manuel Silva in his 2007 “A Historical Perspective of Legged Robots.” The knight couldn’t walk, but it did have two legs.

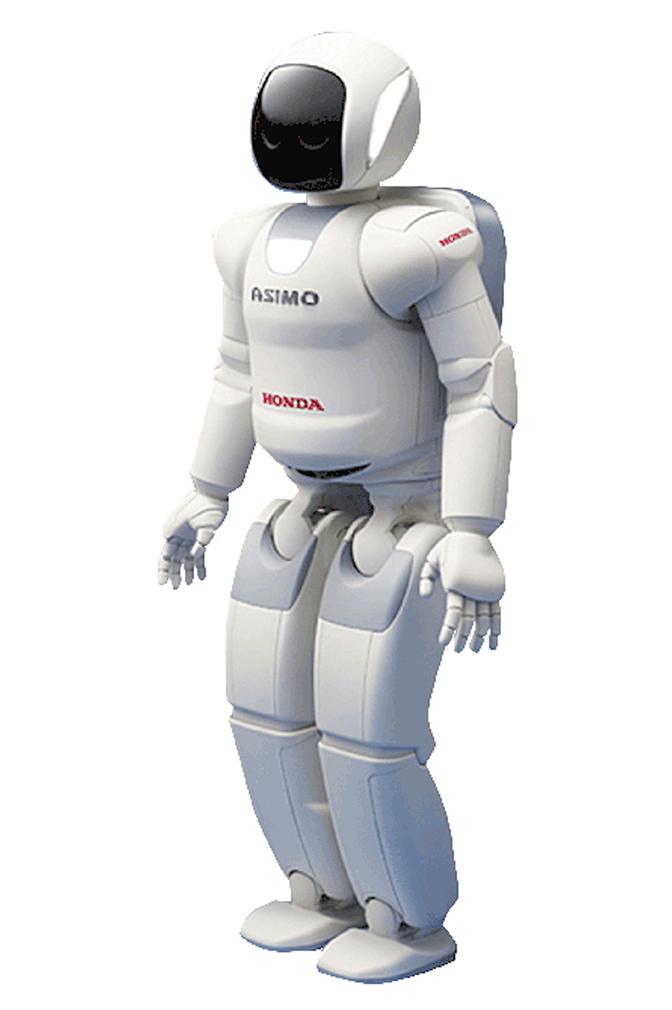

Modern research on bipedal machines picked up in the 1950s. In the 1970s, Japanese engineers built the first working prototype, dubbed WL-5. The 1980s saw early versions of Honda’s ASIMO – which today can run and climb stairs under the right circumstances – and the precursors of Atlas from the MIT Leg Lab.

Today, the DARPA Challenge is showcasing the cutting edge. The bleeding edge is at universities like Michigan and Oregon State.

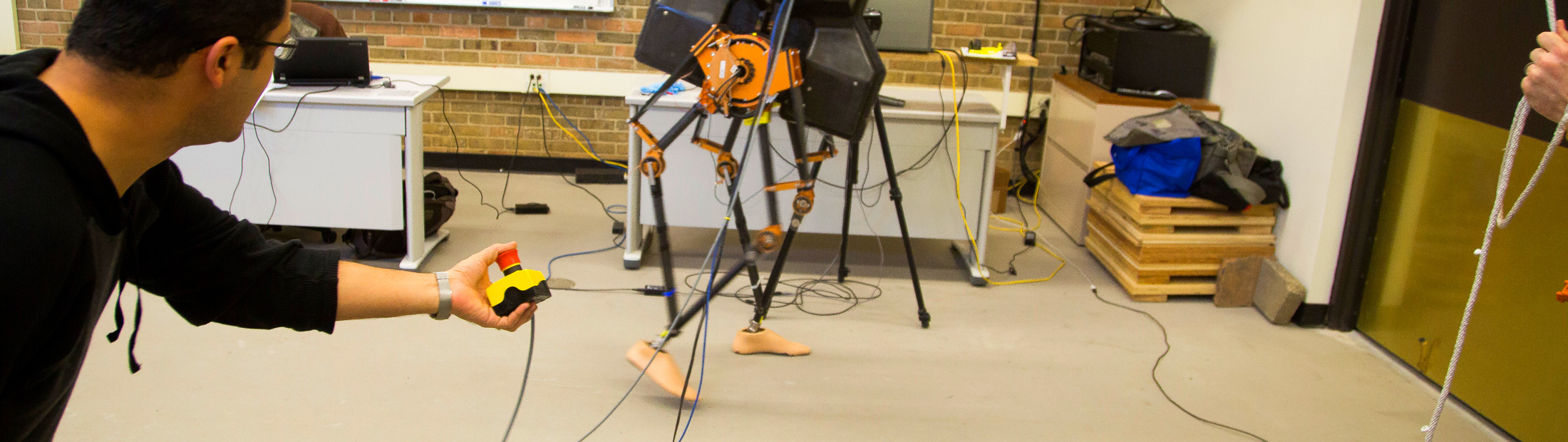

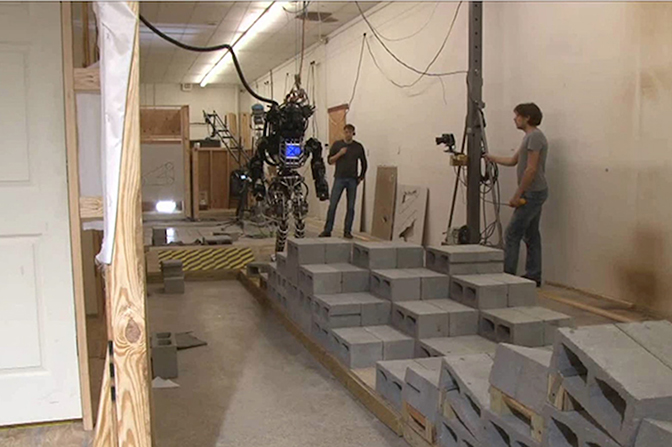

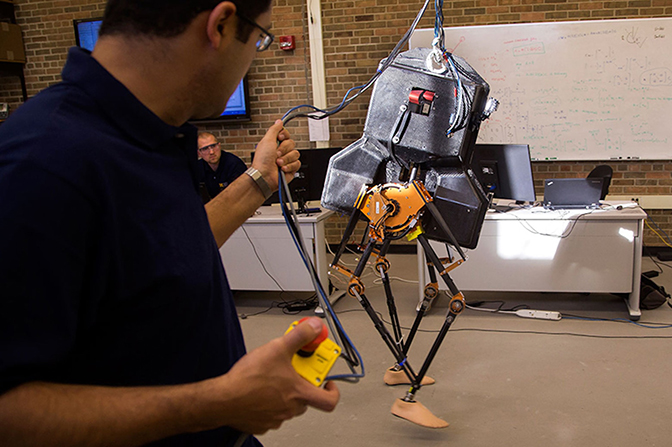

MARLO’s body looks like Darth Vader’s helmet. It doesn’t have arms. Its black, carbon fiber legs are about as light as the dried femurs in the paleoanthropologist’s office. They rest on bare prosthetic feet that help keep things balanced and give the robot a certain casual air.

This is Grizzle’s newest lab mate. It arrived in late 2013 from Hurst, who designed and built it along with two others just like it. Hurst calls them the ATRIAS series, which stands for Assume The Robot Is A Sphere. (For those familiar with the spherical cow joke, Hurst isn’t kidding.) That’s a principle he followed when designing it to match a very simple dynamic model of locomotion so that the team could readily understand and control it.

One ATRIAS stayed in Oregon with him, and another went to Carnegie Mellon. Grizzle named Michigan’s MARLO. (Naming the robots just seems right, Grizzle says. He chooses female names because he thinks that makes them sound approachable – not like killing machines.)

Each research team has a different charge and together they aim to demonstrate in three dimensions what their last robot did in two. In 2012, Popular Mechanics listed Grizzle and Hurst among the magazine’s “10 World Changing Innovators” for “teaching robots to walk.” MABEL, their last collaboration, could jog at a 9-minute-mile pace, step down from an unexpected 8-inch stair and navigate wooden planks in her path, but only in circles in a lab. She was connected to a stabilizing boom.

At 135 pounds, the ATRIAS robots are designed to walk and run efficiently in free space.

Guiding the researchers’ efforts is a theory of two-legged locomotion that Grizzle penned for MABEL and her predecessor, the French robot Rabbit. What’s special about the theory is that it can reconcile in a walking system both the continuous aspects – one foot in front of the other in front of the other – and the discrete parts – when a foot hits the ground and sends impact shocks into the system.

It also treats locomotion as a “nonlinear” phenomenon, which it is. In a nonlinear system, what comes out isn’t directly proportional to what goes in. If you were to graph such a system, you couldn’t represent it as a straight line. It’s difficult for engineers to program robots with nonlinear controls, so usually they don’t. With linear control, they can still create walking and running behaviors, but not very life-like ones. Honda’s ASIMO, designed to be an in-home aid one day, uses linear control. Its steps are fluid, but it has a crouching stance and isn’t able to react in real time to surprises in its path, Grizzle says.

Grizzle plays another video. This one’s of Dutch kinetic sculptor Theo Jansen’s Strandbeest.

It’s an assemblage of PVC pipe that can walk down the beach by itself like a giant centipede.

“Pretty amazing, huh?” Grizzle asks.

The sculpture helps illustrate his theory. The Strandbeest might have a hundred legs, but they all pivot around one point.

“You see all these different angles on all these different legs, they’re all commanded by one central crank,” Grizzle says. “What we do in my lab is use sensing, computation and actuation, or motors, and feedback algorithms to replace the physical connections to the crank with virtual connections.”

His theory gives rise to equations that can describe how people walk. The geometry he’s modeling is shaped like a stick figure.

“You’re a biped,” he says. “You have a crank and a crank angle. It’s the line between your hip and toe. And all of your joints move as a function of this angle. Fundamentally that’s what walking is about – synchronizing your joints.”

When Grizzle’s robots walk, sensors measure the crank angle and feedback algorithms tell each robot how to move its joints as a function of that angle. The gait is human-like in its form and flexibility.
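For readers who think in code, here is a minimal sketch of that idea. It is not Grizzle’s controller. The function names, the knee profile, the step range and the feedback gain are all made up for illustration. It only shows the shape of the loop he describes: measure the hip-to-toe angle, look up a joint target as a function of that angle, and let feedback push the joint toward the target.

```python
import math


def crank_angle(hip, toe):
    """Angle (radians) of the hip-to-toe line, measured from vertical.

    hip and toe are (x, z) positions in the plane of motion.
    """
    dx = hip[0] - toe[0]
    dz = hip[1] - toe[1]
    return math.atan2(dx, dz)


def desired_knee_angle(theta, theta_start=-0.3, theta_end=0.3):
    """Hypothetical knee target written as a function of the crank angle."""
    # Normalise the crank angle to a phase s in [0, 1] over one step.
    s = (theta - theta_start) / (theta_end - theta_start)
    s = min(1.0, max(0.0, s))
    # Illustrative profile (an assumption): bend the knee most in mid-step.
    return 0.2 + 0.4 * math.sin(math.pi * s)


def joint_torque(measured, target, kp=50.0):
    """Proportional feedback that pulls the joint toward its target."""
    return kp * (target - measured)


# One pass through the loop: hip directly above the toe, knee slightly short.
theta = crank_angle(hip=(0.0, 1.0), toe=(0.0, 0.0))
target = desired_knee_angle(theta)
torque = joint_torque(measured=0.5, target=target)
```

Because the target depends on the crank angle rather than on a clock, a stumble that slows the crank also slows every joint that follows it. That is the software stand-in for the Strandbeest’s mechanical linkage.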

Grizzle shows a video of MABEL walking along on her indoor track. Students toss plywood slabs at her feet.

“Look at how mean the students are!” Grizzle says. “He keeps stacking it up, but she just stomps on it and keeps on going. MABEL’s not contemplating life trying to estimate where the obstacles are. She has a control system that has a high sense of balance to walk across rough terrain.”

MARLO will too, says Brent Griffin, a doctoral student in Grizzle’s lab.

“MARLO walks more like a person than a lot of other robots,” he says. “Humans aren’t necessarily in control of every single link and coordinate of our body at a given time. We’re kind of falling from step to step. But that’s OK because we have a good periodic orbit.” Our crank cycles in a steady, repeating pattern.

While he runs to put himself in his robots’ shoes, Hurst, who built MARLO, is most inspired by birds. Ground-running ostriches, turkeys, quail and guinea fowl are the descendants of upright dinosaurs.

“Humans have been on the ground for a lot less time than they have,” Hurst says. “Though the fundamental principles are the same, I believe that a lot about our gait is not as well-evolved. We’re more grounded hairless monkeys than we are refined legged locomotion machines.”

To design the ATRIAS robots, Hurst simulated birds’ running dynamics and measured the forces their steps exerted on the ground. Then he made mechanical legs that act as springs and can reproduce those forces. He recently demonstrated that ATRIAS is the first robot that exerts the same forces on the ground that an animal does when it’s walking.

Grizzle and Hurst each program their robots differently. Hurst treats ATRIAS’ stepping motion as akin to a pogo stick. He lets it bounce along its own path and uses a light touch on the controls to help it rebound, after, say, stepping off a surprise curb.

While it’s not particularly important to Hurst that the legs or the gait look familiar to an onlooker, Grizzle has convinced him it matters. When the two were working on MABEL, for a time each had half of the robot in his lab as a one-legged hopper they called Thumper. Hurst designed it so its knee bent backwards, like a flamingo’s.

“Jessy had an important insight,” Hurst says. “He turned his knee around. Practically and pragmatically it worked better the other way, but people didn’t like it as much.”

People care more about something when it looks human, Hurst says. Students get excited about it. Reporters write stories about it.

“Then when you’re applying for grants, funders already have an introduction to your scientific claims,” he says. “And that’s how you keep a research program going. Never again will I build a machine from purely an engineering stance.”

So it’s partly psychological – why humanoids are designed as they are. Grizzle hints at this when he describes what it’s like to watch MABEL run.

“It’s simultaneously familiar and bizarre to see a machine move like you,” he says. “The legs are moving like yours, but it’s weird. It’s metal. It’s banging around. Its shape is sort of like yours, but not really. Yet it moves with grace like it has a sense of purpose in there. You root for it.”

Studies have shown what he knew instinctively. Robots that resemble living beings, and especially people, can evoke feelings in a way that other mechanical devices don’t. A lot of this has to do with the machines’ shape in both positive and negative space.

Selma Sabanovic studies “social” machines that might one day be companions or pets. As an assistant professor of informatics and computing at Indiana University, she has spent time with the Japanese researchers at the Humanoid Robotics Project sponsored by the nation’s government. Researchers envision the HRP series, like Honda’s ASIMO, as domestic or office helpers. HRP machines might also serve as construction workers.

“What I find fascinating,” Sabanovic says, “is that these robots are embodied and they’re designed to inhabit the same space as humans. They’re in a different realm than a cell phone or a computer, and that has a significant psychological effect on people.”

People interact with robots in a way that’s different from other mechanical devices. (It’s hard to even call a robot a “device.”) They often treat them kindly and expect others to do the same.

Robots might belong to a unique category that’s in between a person or animal and a thing. Where this line is and how technology is blurring it is a focus of Rachel Severson’s research. Severson is a developmental psychologist and postdoctoral research fellow at the University of British Columbia.

Usually by the age of seven, children understand what’s alive and what isn’t, Severson says. They know how to classify trees even though they don’t move.

“But we’re finding that well beyond this age when they should have figured it out, they’re attributing internal states to the robots, like emotion. They believe they can think, they can be friends and they deserve to be treated in a way that is moral.”

In Severson’s studies, adults don’t admit this when they’re interviewed, but they show signs when she watches how they interact with robots. They seem on some level to believe the robots have a will.

In some cases, this is what their designers intended.

“I wouldn’t say this is true for all of them, but for some roboticists I know, there’s a real curiosity around recreating the human form and the technical challenges it involves,” Severson said. “There’s a real curiosity around creating life.”

Researchers say there are a host of reasons to make robots that walk on two legs and move like humans. It seems practical from both engineering and psychological standpoints regardless of whether the machine would be rescuer or butler.

People-shaped robots fit more seamlessly into our world – a world that’s full of constraints, just like our bodies and the machines we make with them. Whether or not there’s anything “right” about the typical human form, it’s the most sophisticated form we know. Or at least we often think it is. It’s the only form we’re aware of that can use its intellect to extract from its environment the means to make anything in its own image. So perhaps the answer to “Why are we doing this?” is “Because we can.”